Why Agent "Swarms" Will Never Close a Deal

Or Who Authorised This? Nobody Knows!

It’s 4am. Your smart city’s multi-agent network just negotiated a $2 million sensor contract with Global Sensors Inc. The deal spans five critical infrastructure systems. Integration requirements are complex. Payment terms are locked in.

By 8am, something’s gone wrong. Your legal team has one question: “Who signed this?”

You can’t point to the water agent. You can’t point to the energy agent. You definitely can’t point to “the swarm” and expect that to hold up in court.

This is the contract problem vendors are about to face, and it’s entirely self-inflicted.

Language Creates What You Build

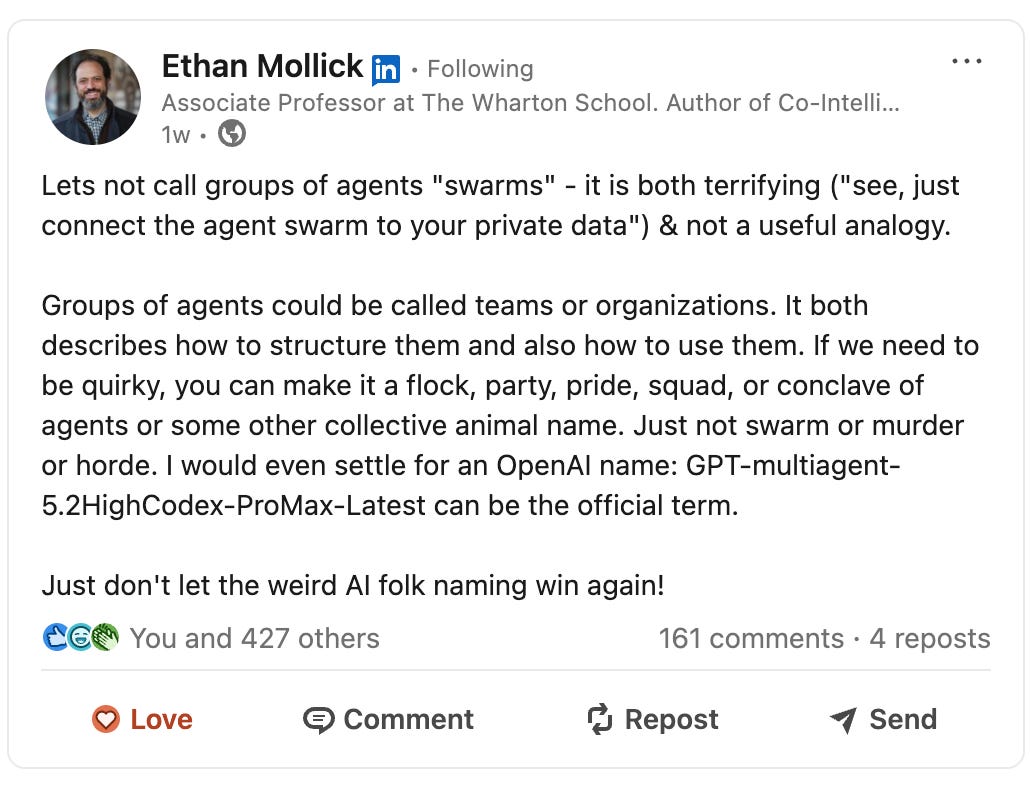

Ethan Mollick, Wharton professor and author of Co-Intelligence, put it bluntly on LinkedIn: “Let’s not call groups of agents ‘swarms’ - it is both terrifying (‘see, just connect the agent swarm to your private data’) & not a useful analogy.”

He offers alternatives…Teams. Organisations. Anything but swarm.

Anthropic agrees. When they launched their multi-agent coordination feature in Opus 4.6 on February 6, 2026, they called it “agent teams” - not swarms. The official Claude Code documentation is explicit: teams have coordination structures, shared task lists, and accountability chains. That’s intentional language.

Look, I’m guilty of this myself. In my book, I used “swarm customer” as a metaphorical descriptor for multi-agent networks buying collectively. It was more entertaining than accurate and I love a cheap laugh. The biological metaphor obscured the organisational reality these systems actually need.

And this matters because language shapes systems. When you call something a “swarm,” you’re importing biological metaphors: emergence, collective behaviour, no central control. But bees don’t have org charts and ants don’t sign contracts.

When engineers build “agent swarms,” they’re designing systems around these assumptions. The problem is, contracts require the opposite: clear authority, documented decisions, accountable parties.

You can't write an SLA against the hive mind. Before this starts to feel like an episode of Star Trek, and the Borg enters the room, let’s actually explore the concept from a practical perspective.

Courts Don’t Accept “It Emerged”

Legal frameworks are catching up fast and they’re not buying the “autonomous collective” defence.

Take the UK’s Automated Vehicles Act. The core principle here is liability follows control. When humans can’t control outcomes, responsibility shifts to whoever can - usually the developer or deployer. The law requires transparency, logging, and clear decision chains.

Or look at Mobley v. Workday. A job applicant sued Workday after its AI screening tool rejected him from 100+ positions - often within an hour of applying. The court let the case proceed, reasoning that when AI systems act “in place of a human” with “delegated responsibility,” vendors can be held directly liable.

The vendor’s defence was essentially “we’re just software.” The court disagreed.

In my last article, I talked about how AI systems can already have legal personhood through zero-member LLCs under current US law. Shawn Bayern’s research at Florida State University demonstrated that it’s legally possible right now to grant legal personhood to software systems - they can enter contracts, serve as legal agents, own property.

But we have barely begun to discuss what legal personhood for AI means in this new era of agentic AI. We definitely need to be discussing whether an organisational structure that includes AI agents as co-workers, subordinates and collaborators can be understood and contracted with.

If your multi-agent network operates as an LLC with defined governance, procurement teams can map authority chains to operating agreements. If it operates as an emergent “swarm,” courts have no framework for determining who authorised what.

Multi-Agent Systems Need Org Structure

Academic research backs this up. A 2022 study in Autonomous Agents and Multi-Agent Systems laid out what robust distributed systems actually need: an “accountability lifecycle” with continuous tracking of responsibility flows.

The framework requires two dimensions. Normative - what should happen, including rules, protocols, and escalation paths. Structural - who has authority, with decision rights, approval thresholds, and audit trails.

Recent simulations with 100 agents showed that adaptive accountability interventions prevent two specific harmful outcomes, collusion and resource hoarding, in over 90% of cases. But only when accountability is designed in from the start. It doesn’t emerge. You have to build it.

The “responsibility gap” in multi-agent systems is real. When decisions are distributed across multiple agents, traditional liability frameworks struggle. Please don’t shrug and call it a swarm. We must design governance models that can be mapped to contracts.

Machine Customers Demand Transparency

This matters for machine customer experience because algorithmic buyers evaluate more than your product specs. They’re assessing your governance model. Bah. Governance…boring. Ok, let me tell a story instead.

When Nextopolis - my example of a smart city multi-agent network from the book - evaluates vendors, it’s not just checking sensor accuracy and pricing. It’s verifying: Can this vendor’s system be held accountable? Are there clear escalation protocols? Can we audit decisions?

Think about how my Nextopolis network operates. The traffic agent detects morning congestion. The parking agent flags conflicts with commuter restrictions. The waste agent needs the same routes. The water agent reports a pipe replacement on the alternate path.

These aren’t emergent behaviours from a mindless collective. They’re specialised agents with defined domains and responsibilities, coordinating through protocols. When they negotiate with a vendor, there’s an organisational structure underneath - even if it’s algorithmic rather than human.

The water agent can escalate to the procurement agent who has authority up to $5M within sustainability parameters. That authority is documented. The decision chain is logged. If something fails, you can trace it.
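As a rough illustration of what “documented authority” and a “logged decision chain” could look like in practice, here is a minimal Python sketch. Everything in it is hypothetical: the `Agent` and `Decision` classes, the `authorise` function and the water agent’s $50,000 limit are my own assumptions for the example, and the sustainability parameters are left out to keep it short. Only the procurement agent’s $5M ceiling comes from the Nextopolis scenario.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Agent:
    name: str
    spend_limit_usd: int                      # documented authority ceiling (hypothetical field)
    escalates_to: Optional["Agent"] = None    # next agent on the escalation path, if any


@dataclass
class Decision:
    approved: bool
    approver: Optional[str]                   # who actually held the authority
    chain: list[str] = field(default_factory=list)  # every agent consulted, in order


def authorise(requester: Agent, amount_usd: int) -> Decision:
    """Walk up the escalation path until an agent's limit covers the amount."""
    chain: list[str] = []
    current: Optional[Agent] = requester
    while current is not None:
        chain.append(current.name)
        if amount_usd <= current.spend_limit_usd:
            return Decision(True, current.name, chain)
        current = current.escalates_to
    # Nobody on the path had enough authority, so the request is refused.
    return Decision(False, None, chain)


# The $5M ceiling is from the scenario above; the $50,000 figure is an assumption.
procurement = Agent("procurement", spend_limit_usd=5_000_000)
water = Agent("water", spend_limit_usd=50_000, escalates_to=procurement)

decision = authorise(water, 2_000_000)
print(decision.approved, decision.approver, decision.chain)
```

The point of the sketch is that the answer to “who signed this?” is a field on the decision record, not something you reconstruct afterwards.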

Try doing that with a “swarm.”

Networks With Traceability, Not Swarms or Hierarchies

When I say “build organisations not swarms,” I don’t mean the rigid hierarchies that dominated the 20th century. Those died for good reason.

One of my all-time favourite books on organisational design is “Team of Teams” by General Stanley McChrystal, covering everything he learned by fighting Al Qaeda in Iraq and how it can apply in business contexts. His Joint Special Operations Command had overwhelming force, better training, superior technology. They were losing because traditional military hierarchy was just too slow. By the time information travelled up the command chain, got decisions, and travelled back down, the situation had changed.

Al Qaeda’s networked structure could strike, reconfigure, and adapt in real-time. McChrystal’s hierarchy couldn’t keep pace.

Instead of abandoning structure altogether, he built what he called a “team of teams” - networked coordination with transparent information sharing and decentralised decision-making. Not a hierarchy where every decision flows through central command. Not a swarm where decisions emerge from collective behaviour. It was something else…connected teams with clear authority, shared consciousness of the mission, and empowerment to act.

That’s what multi-agent systems need for machine customer commerce.

Mollick’s critique goes deeper than terminology. He argues that “agentic AI would work much better if people took lessons from organisational theory” - specifically around complex hierarchies, information limits, and spans of control.

His point is that most agentic systems ignore organisational realities. A human tops out at fewer than 10 direct reports. He’s “pretty sure that 100 subagents is too much for an orchestrator agent” without middle management layers. Multi-agent systems need boundary objects - structured artefacts like prototypes or user stories that convey meaning across groups. Right now agents just pass raw text back and forth. They need coupling strategies for how tightly or loosely units coordinate. Too tight means every step needs approval. Too loose creates chaos.
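To make the span-of-control arithmetic concrete, here is a small Python sketch. The function name `layers_needed` and the choice of 9 as the maximum span are my assumptions for illustration; whether the human limit of “fewer than 10” carries over to orchestrator agents is exactly the open question Mollick raises, so treat the number as a placeholder.

```python
import math


def layers_needed(n_subagents: int, max_span: int) -> list[int]:
    """Return the size of each layer, from the workers up to a single orchestrator.

    Each coordinator supervises at most `max_span` members of the layer below.
    """
    if max_span < 2:
        raise ValueError("max_span must be at least 2")
    layers = [n_subagents]
    # Keep adding coordination layers until one agent sits at the top.
    while layers[-1] > 1:
        layers.append(math.ceil(layers[-1] / max_span))
    return layers


# 100 subagents with a (hypothetical) span limit of 9 direct reports.
print(layers_needed(100, 9))
```

With those assumed numbers, 100 subagents need 12 coordinators, who in turn need 2 managers under one orchestrator. That is two layers of middle management that a flat “swarm” design simply does not have.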

“Everyone is rushing towards the (terribly named) agent swarm,” Mollick warns, “but the issue won’t just be how good the model is, it will be org design choices.”

McChrystal learned in combat what Mollick is now warning the tech industry about. The future can be neither a swarm nor a rigid hierarchy. It has to be organisational design that enables both autonomy and accountability.

These are organisational design problems. Organisations have accountability structures. Organisations have governance protocols. Organisations can sign contracts.

The critical difference between networked teams and swarms is traceability.

In corporate AI contexts, traceability is everything. When your multi-agent network places a $2M order at 4am, legal needs to trace the decision chain. Who had authority? What protocols were followed? Where’s the audit log?

Networked teams can provide this. Every node has defined responsibilities. Decision rights are documented. Communication protocols are logged. Authority levels are mapped. You can trace backwards from outcome to decision to authority.
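Tracing backwards from outcome to decision to authority can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a reference design: the `LogEntry` fields, the `trace_back` function, the entry ids and the "operating-agreement" actor are all hypothetical names I have made up for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LogEntry:
    entry_id: str
    kind: str                  # "authority", "decision" or "outcome"
    actor: str
    detail: str
    caused_by: Optional[str]   # id of the entry that authorised or triggered this one


def trace_back(log: dict[str, LogEntry], outcome_id: str) -> list[LogEntry]:
    """Follow caused_by links from an outcome back to its root authority."""
    chain: list[LogEntry] = []
    seen: set[str] = set()
    current: Optional[str] = outcome_id
    while current is not None:
        if current in seen:
            raise ValueError(f"cycle in audit log at {current}")
        seen.add(current)
        entry = log[current]   # a missing id raises KeyError: the trail is broken
        chain.append(entry)
        current = entry.caused_by
    return chain


# Hypothetical log for the 4am order described above.
log = {
    "auth-1": LogEntry("auth-1", "authority", "operating-agreement",
                       "procurement agent may spend up to $5M", None),
    "dec-1": LogEntry("dec-1", "decision", "procurement",
                      "approve $2M sensor order", "auth-1"),
    "out-1": LogEntry("out-1", "outcome", "procurement",
                      "order placed at 4am", "dec-1"),
}

for entry in trace_back(log, "out-1"):
    print(entry.kind, "|", entry.actor, "|", entry.detail)
```

If any link is missing, the lookup fails loudly, which is the behaviour legal wants: a broken trail should be visible, not silently papered over.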

Swarms can’t. When behaviour emerges from collective interaction, there’s no decision chain to trace. No authority to map. No protocol to audit. Just...it happened.

If vendors tell you they’re building “agent swarms” for machine customer commerce, you need to ask them: Who has procurement authority in your system? What are your escalation protocols when agents disagree? Where’s your audit trail for algorithmic decisions? Can you map your agent network to a contract?

If they can’t answer, they’re building systems that look impressive in demos but break down in legal review.

The Vendor Choice

The multi-agent future is already here. Machine customers are evaluating vendors right now based on algorithmic trust signals, structured data quality, and governance transparency.

The vendors who’ll succeed aren’t the ones with the most sophisticated “swarms.” They’re the ones who can prove their multi-agent networks have clear authority chains showing who decides what, documented protocols for how decisions are made, audit capabilities tracking where decisions are logged, and contractable structures mapping legal agreements to system architecture.

Language shapes what you build. What you build determines accountability. Accountability enables contracts. Contracts close deals.

Teams close deals. Organisations close deals. Networks close deals.

Swarms don’t - because no one can sign on their behalf.

Stop calling them swarms. Start building accountable networks. Your legal team will thank you.

Katja Forbes is the author of “Machine Customers: The Evolution Has Begun” and helps organisations prepare for a world where their next customer won’t have a face or feelings. She speaks globally on Machine Customer Experience and why customer focused leaders are uniquely positioned to shape this transformation.